At some point last year, I watched Claude complete a task I had not fully described. I had asked it to help organize a project folder. It read the files, determined the structure I probably wanted, created subfolders, moved things around, and summarized what it had done. The whole thing took about a minute.

My first reaction was simple amazement. My second was: Um, wait. What if I had not wanted that?

That question turned into a project, and that project turned into the Claude Governance Architecture I describe below.

What I did not know when we started was that the architecture would need to change almost as fast as Claude was improving. That turns out to be the most important thing I can tell you.

At FutureBridge, we adopted Claude Cowork early and enthusiastically. We built internal applications, managed document libraries, generated client materials, and generally pushed the tool and ourselves as hard as we could. Claude is genuinely good at this. It is remarkably capable, and it actually does things; it does not just suggest them.

But agentic AI is a different category of tool. A calculator that gives you a wrong answer is annoying. An AI agent that acts on a wrong assumption can make changes, send messages, and move data before you have a chance to intervene. We figured this out not through disaster but through a series of smaller moments that added up to a clear conclusion: we needed a governance framework, and we needed to build it before we needed it.

So we built one with Claude Cowork.

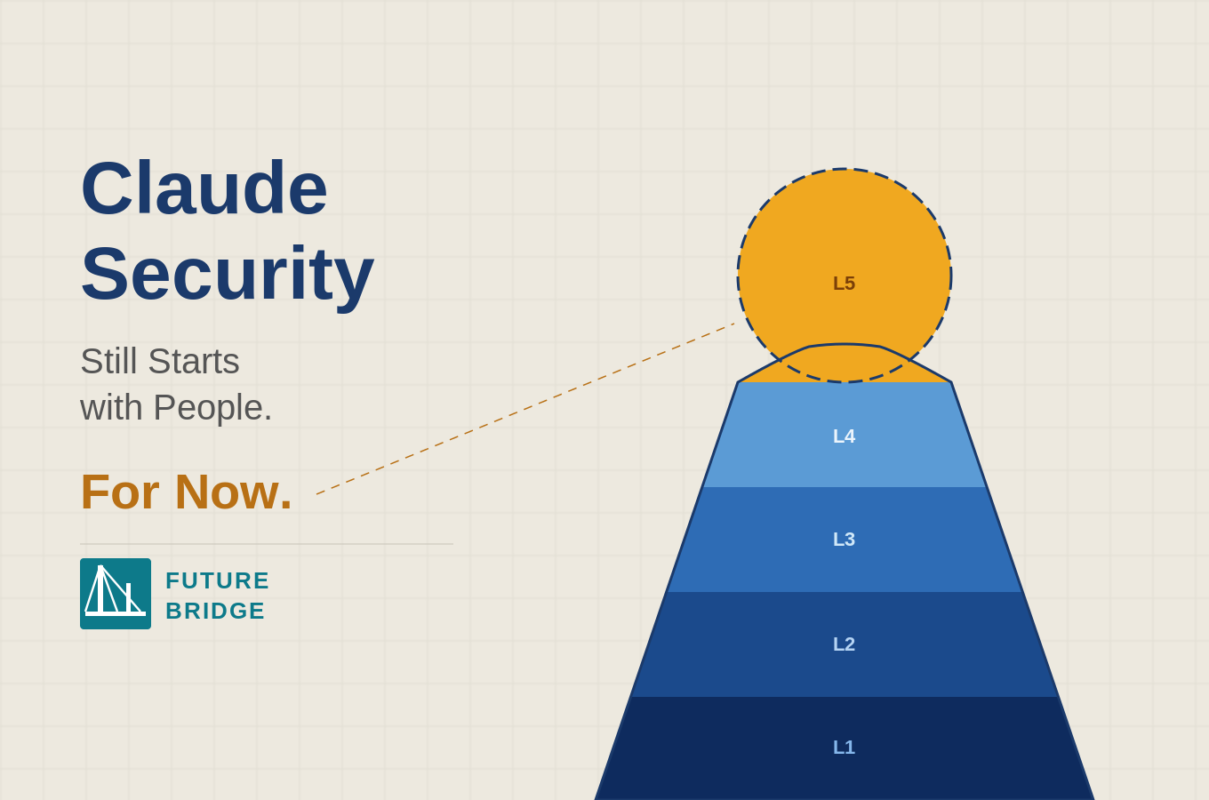

The idea: five filters, not one wall

Most people think of security as a perimeter. You build a wall, and things either get through or they don’t. That model made sense when the threat was external. It makes less sense when the tool you’re governing sits inside your organization, on your machines, running under your users’ credentials.

We designed the Claude Governance Architecture around a different metaphor. Think of it as a series of filters. A user’s request passes through five independent layers, and each one enforces policy on its own terms. If one fails or gets bypassed, the others still hold.

Here is what those layers look like in practice.

The outermost layer, Layer 1, is your existing enterprise security stack: Microsoft Purview, Symantec, Zscaler, or whatever you already have. These tools scan all outbound network traffic regardless of what generated it. Claude does not get a special pass.

Layer 2 is the Claude platform itself. Anthropic gives you seat management, team limits, and account-level controls. This is where you decide who has access and what they are allowed to do.

Layer 3 is where we spent the most energy. This is the corporate proxy, and it is the architectural centerpiece of the whole system. Every Claude API call from every machine in your organization runs through it. It logs token counts, request and response content, user identity, and timestamps. It enforces rate limits per user, cost caps, and content policy rules. You can build it on Azure API Management, AWS API Gateway, Cloudflare Workers, or nginx. The infrastructure options are flexible. The principle is not: everything passes through here, and everything is logged.
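The proxy's per-request logic is simple enough to sketch. Below is a minimal Python version of what a Layer 3 gateway might run on each call: a content-policy check, a sliding 60-second per-user rate limit, a per-user spending cap, and a log entry tying identity, timestamp, content, and token counts together. All names, limits, the blocked term, and the per-token price are hypothetical placeholders. The sketch keeps state in memory and never calls Anthropic's API; a real deployment on API Management, API Gateway, Workers, or nginx would forward the request upstream and write the log to durable storage.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class UsageRecord:
    """One logged API call: identity, time, content, and token counts."""
    user: str
    timestamp: float
    prompt: str
    response: str
    input_tokens: int
    output_tokens: int
    cost_usd: float


@dataclass
class ProxyPolicy:
    # Hypothetical values throughout; placeholders, not recommendations.
    max_requests_per_minute: int = 20
    period_cost_cap_usd: float = 50.0
    usd_per_1k_tokens: float = 0.01  # hypothetical blended rate, not Anthropic pricing
    blocked_terms: tuple = ("PROJECT-NIGHTJAR",)  # hypothetical content-policy rule
    log: list = field(default_factory=list)
    _recent: dict = field(default_factory=dict, init=False)  # user -> request times in last 60 s
    _spend: dict = field(default_factory=dict, init=False)   # user -> spend this billing period

    def admit(self, user: str, prompt: str, now: Optional[float] = None) -> tuple:
        """Decide whether a request may be forwarded upstream."""
        now = time.time() if now is None else now
        if any(term.lower() in prompt.lower() for term in self.blocked_terms):
            return False, "content policy violation"
        # Sliding 60-second window per user.
        window = [t for t in self._recent.get(user, []) if now - t < 60]
        self._recent[user] = window
        if len(window) >= self.max_requests_per_minute:
            return False, "rate limit exceeded"
        if self._spend.get(user, 0.0) >= self.period_cost_cap_usd:
            return False, "cost cap reached"
        window.append(now)
        return True, "ok"

    def record(self, user: str, prompt: str, response: str,
               input_tokens: int, output_tokens: int,
               now: Optional[float] = None) -> UsageRecord:
        """Log a completed call and charge its cost against the user's cap."""
        cost = (input_tokens + output_tokens) / 1000 * self.usd_per_1k_tokens
        rec = UsageRecord(user, time.time() if now is None else now,
                          prompt, response, input_tokens, output_tokens, cost)
        self.log.append(rec)
        self._spend[user] = self._spend.get(user, 0.0) + cost
        return rec
```

A sliding window is used here because it is easy to reason about; a token bucket would allow short bursts while holding the same average rate. Resetting `_spend` at the start of each billing period is left out.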

Layer 4 sits on each user’s machine. This is a locally installed DLP skill that scans for sensitive data before anything leaves the workspace: PII, financial records, health data, and confidential markings. It runs before a file is sent and before content is saved, and it can also be triggered on demand to audit a full folder. On the same machine, alongside the DLP skill, lives the CLAUDE.md policy file: the always-on instructions that govern every Claude session on that machine.
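At its core, a DLP skill is pattern matching over text. The sketch below is a minimal Python illustration, not our actual skill: it flags email addresses, US Social Security numbers, payment-card numbers (filtered with a Luhn checksum to cut false positives), and uppercase CONFIDENTIAL or INTERNAL ONLY markings, and it includes a folder audit for on-demand use. The pattern set, file suffixes, and function names are assumptions. Health data in particular is not covered here and would need its own rules.

```python
import re
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Finding:
    kind: str
    masked: str  # only the last 4 characters survive, so reports do not re-leak the data


# Illustrative patterns only; a production DLP skill needs far broader coverage.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "payment_card": re.compile(r"\b(?:\d[ -]?){12,18}\d\b"),  # 13-19 digits
    "confidential_marking": re.compile(r"\b(?:CONFIDENTIAL|INTERNAL ONLY)\b"),
}


def luhn_ok(digits: str) -> bool:
    """Luhn checksum: discards random digit runs that cannot be card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def mask(s: str) -> str:
    return "*" * max(len(s) - 4, 0) + s[-4:]


def scan_text(text: str) -> list:
    """Return every finding in a piece of text (run before a send or save)."""
    findings = []
    for kind, pattern in PATTERNS.items():
        for m in pattern.finditer(text):
            value = m.group()
            if kind == "payment_card" and not luhn_ok(re.sub(r"\D", "", value)):
                continue
            findings.append(Finding(kind, mask(value)))
    return findings


def scan_folder(root: str, suffixes=(".txt", ".md", ".csv")) -> dict:
    """On-demand audit: map each text file under root to its findings."""
    report = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in suffixes:
            hits = scan_text(path.read_text(errors="ignore"))
            if hits:
                report[str(path)] = hits
    return report
```

Findings carry only a masked value, so the scan report itself does not become another copy of the sensitive data.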

Layer 5 is the end user. For now. More on that shortly.

Layers 3 and 4 look similar. They’re not.

People sometimes ask why we need both a corporate proxy and a local DLP skill when they both seem to catch the same kinds of problems. The answer is that they operate completely differently, and that difference is what makes the system resilient.

The corporate proxy is invisible to users. It intercepts API calls silently, logs everything, and enforces rate limits without anyone ever seeing it work. IT sees it. Users don’t. It is the silent watchdog.

The local DLP skill is visible to users by design. When it flags something, the session pauses and the user sees a prompt. They have to make a decision. That is not a bug; that is the point. One layer watches quietly from the center. The other creates a moment of accountability at the edge.

Neither one alone is sufficient. Both together mean there is no single point of failure.

People had to build this. Mostly.

Here is something the architecture diagram does not show you: every layer in this stack required people to create it, deploy it, and maintain it.

Take the DLP skill. It did not come out of a box. Someone had to design it, write it, and test it against real data patterns. That was a deliberate act of governance, completed before any user ever opened Cowork. Spoiler alert: Claude helped us write it and test it. The skill is only as good as the thinking that went into it, and some of that thinking was Claude’s.

Then someone had to install it. A DLP skill sitting in a repository is not governance. A DLP skill running on every machine in your organization, pointed at the right folders, configured correctly, is. IT leads had to deploy the standard plugin bundle, configure workspace paths, enforce folder access permissions at the OS level, and route all Claude API traffic through the proxy. If any of those steps is skipped, the governance loop breaks before it starts. The machine does not know it is misconfigured. Only the person who provisioned it does. That said, I suspect we could have Claude run that check too.
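Much of that check is mechanical, which is why it is a good candidate for automation. Here is a minimal Python sketch of a per-machine provisioning check. It assumes that Claude clients on the machine read their API endpoint from the `ANTHROPIC_BASE_URL` environment variable (the Anthropic SDKs honor this variable; whether a given Cowork deployment does is something to confirm). The proxy hostname, workspace path, and file layout are all hypothetical, and OS-level folder permissions are not checked.

```python
import os
from pathlib import Path
from typing import Mapping, Optional
from urllib.parse import urlparse

# Hypothetical values for illustration; substitute your own proxy host and file layout.
EXPECTED_PROXY_HOST = "claude-proxy.example.internal"
REQUIRED_FILES = ("CLAUDE.md", "skills/dlp-scan/SKILL.md")


def check_machine(workspace: Path, env: Optional[Mapping[str, str]] = None) -> list:
    """Return provisioning problems; an empty list means every check passed."""
    env = os.environ if env is None else env
    problems = []

    # Assumption: clients take their API endpoint from ANTHROPIC_BASE_URL.
    base_url = env.get("ANTHROPIC_BASE_URL", "")
    if urlparse(base_url).hostname != EXPECTED_PROXY_HOST:
        problems.append(
            f"API traffic not routed through proxy (ANTHROPIC_BASE_URL={base_url or 'unset'})"
        )

    if not workspace.is_dir():
        problems.append(f"workspace folder missing: {workspace}")
    else:
        for rel in REQUIRED_FILES:
            if not (workspace / rel).is_file():
                problems.append(f"missing governance file: {rel}")
    return problems


if __name__ == "__main__":
    issues = check_machine(Path.home() / "ClaudeWorkspace")  # hypothetical workspace path
    for issue in issues:
        print("FAIL:", issue)
    print("OK" if not issues else f"{len(issues)} problem(s) found")
```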

And then there is the user. You can have the best technical architecture in the world, and if the person sitting at the keyboard does not understand the rules, the architecture is a locked door with the key left in the lock. We have a CLAUDE.md policy file that specifies the always-on instructions for every Claude session. It only works if users have read it, understood what it means, and internalized what it asks of them. That requires training, communication, and follow-through. None of that is automated. Let’s see how long that lasts.

The architecture depends on three human touchpoints before any technical control comes into play: the people who built the tools, the people who deployed them correctly, and the people who operate inside the boundaries those tools define. Remove any one and the system can break down.

On keeping people in the loop

Every governance piece you read right now will tell you that human judgment is the ultimate safeguard. Keep people in the loop. Never let the machine decide alone. I have written versions of that argument myself.

It is not wrong. It is just incomplete.

When the local DLP skill flags a potential data issue, the Cowork session pauses. The user gets a choice: cancel the action, redact the flagged content, review it line by line, or override the warning and proceed. If they override, that decision is logged and tied to their identity for compliance purposes. This is useful. It creates accountability and keeps decisions visible.
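The pause-and-decide flow can be sketched as a single function that takes the user's choice and returns what happens next. This is a hypothetical Python illustration, not Cowork's interface: the `Choice` values mirror the four options above, redaction is a plain string replacement, "review" returns the flagged lines for the user to walk through, and only an override writes an audit entry tied to the user's identity.

```python
import json
import time
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Choice(Enum):
    CANCEL = "cancel"
    REDACT = "redact"
    REVIEW = "review"
    OVERRIDE = "override"


@dataclass
class Outcome:
    proceed: bool                                      # may the paused action continue?
    text: Optional[str] = None                         # content to send if it proceeds
    review_lines: list = field(default_factory=list)   # lines to show for line-by-line review


def resolve_flag(user: str, text: str, flagged: list, choice: Choice,
                 audit_log: list, now: Optional[float] = None) -> Outcome:
    """Apply the user's decision on a DLP flag (hypothetical API)."""
    if choice is Choice.CANCEL:
        return Outcome(proceed=False)
    if choice is Choice.REDACT:
        for item in flagged:
            text = text.replace(item, "[REDACTED]")
        return Outcome(proceed=True, text=text)
    if choice is Choice.REVIEW:
        lines = [ln for ln in text.splitlines() if any(item in ln for item in flagged)]
        return Outcome(proceed=False, review_lines=lines)
    # OVERRIDE: proceed unchanged, but leave a compliance record tied to the user.
    audit_log.append(json.dumps({
        "event": "dlp_override",
        "user": user,
        "timestamp": time.time() if now is None else now,
        "flagged_count": len(flagged),  # count only, never the flagged values
    }))
    return Outcome(proceed=True, text=text)
```

The audit entry records how many items were flagged rather than the items themselves, so the compliance log does not end up holding the very data the DLP skill was meant to protect.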

But here is what I have watched happen over the past year. Claude’s judgment has gotten better. The cases where a human override adds real value are narrowing. The cases where the pause is just friction, where the user clicks through because they already know the answer, are growing. The architecture we designed around human review made sense for Claude’s capabilities when we built it. It may not be the right design in twelve months.

We keep people in the loop because it is the right call today. We are watching carefully to understand where that loop needs to move.

Where we are, and why the map keeps changing

I want to be honest about where FutureBridge actually is, because the roadmap matters as much as the destination.

We have completed Phase 1: the foundation. Cowork is installed, the Governance folder exists, the local DLP skill is deployed. That part is done.

We are in Phase 2 now: control. We are publishing the CLAUDE.md policy file organization-wide, deploying the corporate proxy across all users, and expanding coverage systematically. Governance that lives only on a few machines is not really organizational governance.

Phase 3, which we have planned but not yet started, is about visibility. A token usage dashboard, automated override audit reports, compliance metrics tracked over time. Finance and Legal come into scope here. That is when governance becomes something you can measure and report on, not just something you hope is working.
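An override audit report reduces to a straightforward aggregation. As a rough Python sketch, assuming each DLP flag is recorded with the user, the month, and whether it was overridden (a hypothetical record shape, not an existing export format), the report is per-user, per-month counts plus an override rate:

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FlagEvent:
    user: str
    month: str        # hypothetical "YYYY-MM" bucket
    overridden: bool  # did the user click through the DLP warning?


def override_report(events) -> dict:
    """Per (user, month): flags raised, overrides, and override rate."""
    flags, overrides = Counter(), Counter()
    for e in events:
        key = (e.user, e.month)
        flags[key] += 1
        if e.overridden:
            overrides[key] += 1
    return {
        key: {
            "flags": flags[key],
            "overrides": overrides[key],
            "override_rate": overrides[key] / flags[key],
        }
        for key in sorted(flags)
    }
```

Tracked over time, that rate is also one signal for the question raised earlier about human review: a steadily climbing override rate may mean the pause has become friction rather than protection.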

But here is the thing about a three-phase roadmap when the underlying technology is moving this fast: the phases are right, but the destinations will shift. What we are building toward in Phase 3 will look different by the time we get there, because Claude will be different. New capabilities will open new risks. Some controls that feel essential today will feel like overkill tomorrow. Others we have not thought of yet will become necessary.

Governance is not a project you finish. It is a practice you keep revisiting, specifically because the thing you are governing keeps changing.

The conclusion I keep coming back to

The thing that surprised me most, working through all of this, is that the hardest part is not building the architecture. It is accepting that the architecture you build today is probably not the one you will need in a year.

We built a system with people at several key control points. That made sense for where Claude was when we started. Claude helped us design its own governance, which tells you something about where this is going. The question is not whether people should be in the loop. The question is which parts of the loop still need them, and for how long.

Agentic AI is genuinely powerful. That is not hype; I have seen it firsthand at FutureBridge. The governance has to be just as dynamic as the capability. A framework built for Claude today and left unchanged is not a governance framework. It is a snapshot.

We built the architecture. We are already revising it. That, more than anything else, is the point.

FutureBridge: Building the governed AI enterprise.